GenAI Studio: News, Tools, and Teaching & Learning FAQs

These sixty-minute weekly sessions, facilitated by Technologists and Pedagogy Experts from the CTLT, are designed for faculty and staff at UBC who are using, or thinking about using, Generative AI tools as part of their teaching, research, or daily work. Each week we discuss the news of the week, highlight a specific tool for use within teaching and learning, and then hold a question and answer session for attendees.

They run on Zoom every Wednesday from 1pm – 2pm and you can register for upcoming events on the CTLT Events Website.

News of the Week

Each week we discuss several news items from the Generative AI space over the past 7 days. Because this industry is moving so fast, there’s usually a flood of new AI-adjacent news every week, so we highlight the articles that are most relevant to the UBC community.

In this week’s tech news, NVIDIA releases a family of frontier-class, multimodal large language models (LLMs) that perform exceptionally on vision-language tasks. Liquid AI launches its Liquid Foundation Models (LFMs), designed for high quality results while “maintaining a smaller memory footprint and more efficient inference”. OpenAI holds its Dev Day and announces four new products in the API. Furthermore, OpenAI bets that AI-powered assistants will become mainstream by 2025. Finally, Microsoft has released a paper that considers methods for integrating external data into LLMs using techniques such as Retrieval Augmented Generation (RAG) or fine-tuning.

Here’s this week’s news:

NVIDIA Unveils NVLM 1.0 Family

NVIDIA has introduced the NVLM 1.0 family, a set of powerful multimodal large language models designed for both vision-language tasks and text-only tasks. The flagship model, NVLM-D-72B, with 72 billion parameters, competes with leading AI systems like GPT-4 and Google’s models, offering enhanced performance across various benchmarks, particularly in vision-language tasks. NVLM model weights and training code are open-source, marking a significant step toward more open AI development.

Liquid AI Launches Foundation Models

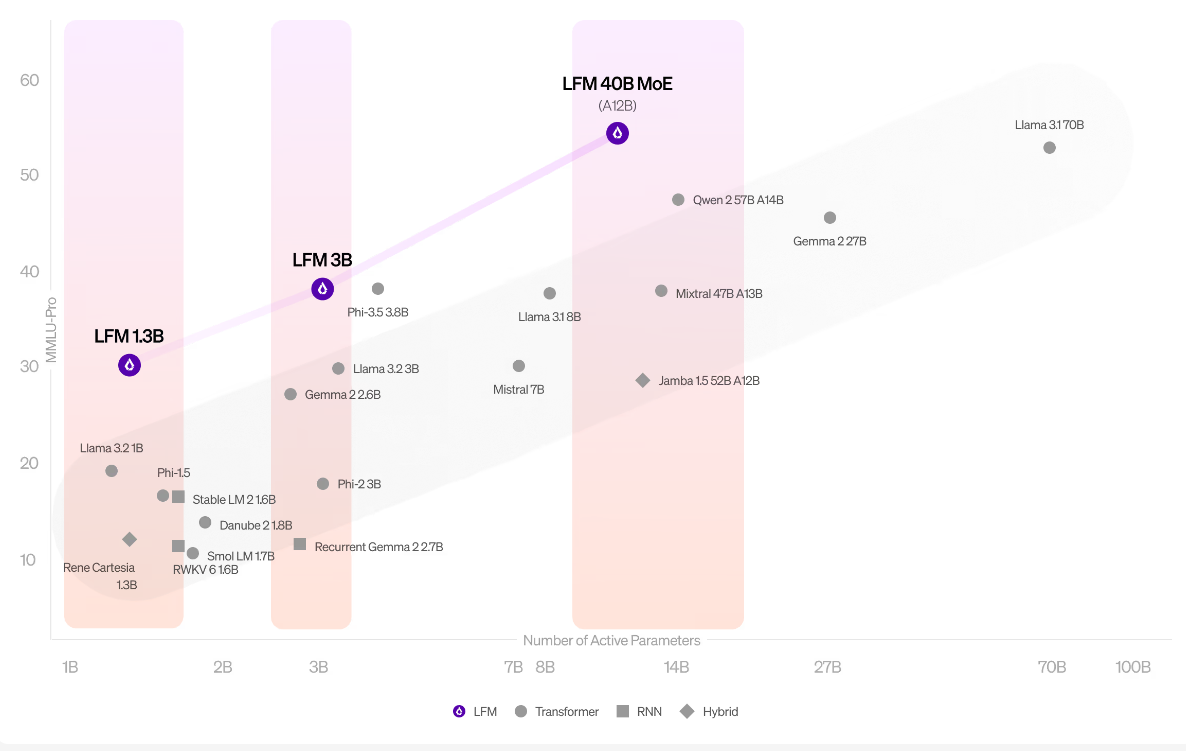

Liquid AI has released its Foundation Models, offering organizations customizable, pre-trained AI models that can be fine-tuned for various tasks. These models aim to provide enterprises with a scalable solution for AI implementation across industries, focusing on reducing memory footprint and increasing inference efficiency. The three models, with 1.3B, 3B, and 40B parameters, are presented as having the best performance/size tradeoffs in their respective categories.

Explore Liquid Foundation Models here.

OpenAI Dev Day

OpenAI recently held its Dev Day, showcasing new tools and features and offering deep dives into its latest technologies, including updates to ChatGPT and API integrations. OpenAI announced four products in the API: the Realtime API for building low-latency speech-to-speech experiences, Vision fine-tuning to improve models using images as well as text, Prompt Caching to reduce the cost of inputs the model has recently seen, and Model Distillation to use outputs from a larger model to fine-tune smaller models.

Learn more about OpenAI Dev Day and its API products here.

OpenAI Bets on AI Agents Becoming Mainstream by 2025

OpenAI is predicting that AI-powered assistants, or “AI agents,” will become mainstream by 2025. These agents, designed to reason and handle complex tasks, are the focus of competition between tech giants like Google and Apple. OpenAI’s Chief Product Officer, Kevin Weil, highlighted the potential for these systems to interact like humans. At OpenAI’s recent developer day, the company showcased its new “o1” model series and enhanced voice capabilities in GPT-4, allowing real-time interaction via voice commands, much like a live conversation.

ChatGPT Aids Brain Tumor Detection

A study has demonstrated that ChatGPT can assist in diagnosing brain tumors, providing diagnostic support on par with expert radiologists. ChatGPT recorded 73% accuracy when diagnosing brain tumors from MRI reports, and an even higher 80% accuracy when given reports written by neuroradiologists. Both figures exceed the accuracy of neuroradiologists (72%) and general radiologists (68%). The study suggests that models like ChatGPT could play a vital role in medical imaging and cancer detection, highlighting the potential for AI in healthcare advancements.

Microsoft Releases Paper on Integrating External Data to LLMs

This paper by Microsoft offers a detailed review of techniques for integrating external data with large language models (LLMs). It categorizes user queries into different types, focusing on the challenges and strategies for retrieving relevant data. The survey highlights methods like context integration, small model use, and fine-tuning for optimizing LLM applications. The goal is to help developers tackle key issues in data-augmented LLM tasks.
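For readers curious about what "context integration" looks like in practice, here is a minimal sketch of the retrieval step behind RAG. It is not taken from the Microsoft paper: the sample documents, the function names, and the simple word-count similarity are all assumptions chosen for illustration, and real systems typically use learned embeddings and a vector database instead.

```python
import math
import re
from collections import Counter

# Hypothetical course notes standing in for "external data" (illustrative only).
DOCUMENTS = [
    "Office hours are held on Tuesdays from 2pm to 3pm.",
    "The final project is due on the last day of classes.",
    "Late assignments lose ten percent per day.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Place the retrieved passages in front of the question for the LLM."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    print(build_prompt("When is the final project due?", DOCUMENTS))
```

The resulting prompt would then be sent to an LLM of your choice. The model itself is unchanged; it simply sees the retrieved passage alongside the question, which is what distinguishes this approach from fine-tuning.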

Tool of the Week

Tool of the Week: Pika AI

What is Pika AI?

Pika is an AI-powered platform built to help users create art and graphics more efficiently. The tool leverages machine learning to allow users to generate, customize, and enhance their creative projects with minimal manual input. Pika can generate both video and audio from text prompts.

How is it used?

Users can input design parameters or images, and Pika uses AI to generate artistic elements or full designs. It provides customizable options, allowing for adjustments in color, style, and form. Users can also generate videos of effects being applied, such as crushing, melting, and inflating an object. This makes it a useful tool for both professional designers and hobbyists seeking to speed up their workflow.

What is it used for?

Pika is ideal for graphic design tasks such as logo creation, branding, or digital illustrations. By incorporating AI, it enables faster ideation and allows creatives to explore multiple variations of a concept quickly, making it a valuable resource for design studios or independent artists.

For more information, explore Pika AI.

Without a Privacy Impact Assessment (PIA), instructors cannot require students to use the tool or service unless they provide alternatives that do not require the use of students' private information.

Questions and Answers

Each studio ends with a question and answer session in which attendees can ask questions of the pedagogy experts and technologists who facilitate the sessions. We have published a full FAQ section on this site. If you have other questions about GenAI usage, please get in touch.


Assessment Design using Generative AI

Generative AI is reshaping assessment design, requiring faculty to adapt assignments to maintain academic integrity. The GENAI Assessment Scale guides AI use in coursework, from study aids to full collaboration, helping educators create assessments that balance AI integration with skill development, fostering critical thinking and fairness in learning.


How can I use GenAI in my course?

In education, GenAI offers a multitude of applications within your courses. A detailed table categorizes various use cases, outlining the specific roles they play, their pedagogical benefits, and the potential risks associated with their implementation. A complete breakdown of each use case and the original image can be found here. At […]